Building Trust: Authentication, Authorization, and Audit Logging


Trust, But Verify (Then Log Everything)

In healthcare IT, "it works" isn't enough. You need to prove:

Who accessed data

What they accessed

When they accessed it

Why (in some cases)

That you can reconstruct what happened 6 months ago

This isn't paranoia—it's HIPAA compliance. And it's the difference between a minor incident and a career-ending breach.

Authentication: Keycloak Integration

I needed enterprise-grade authentication without building it myself. Enter Keycloak.

Why Keycloak?

✅ Battle-tested: Used by Red Hat and many others

✅ SSO/SAML/OIDC: Hospitals/healthcare companies love their SAML

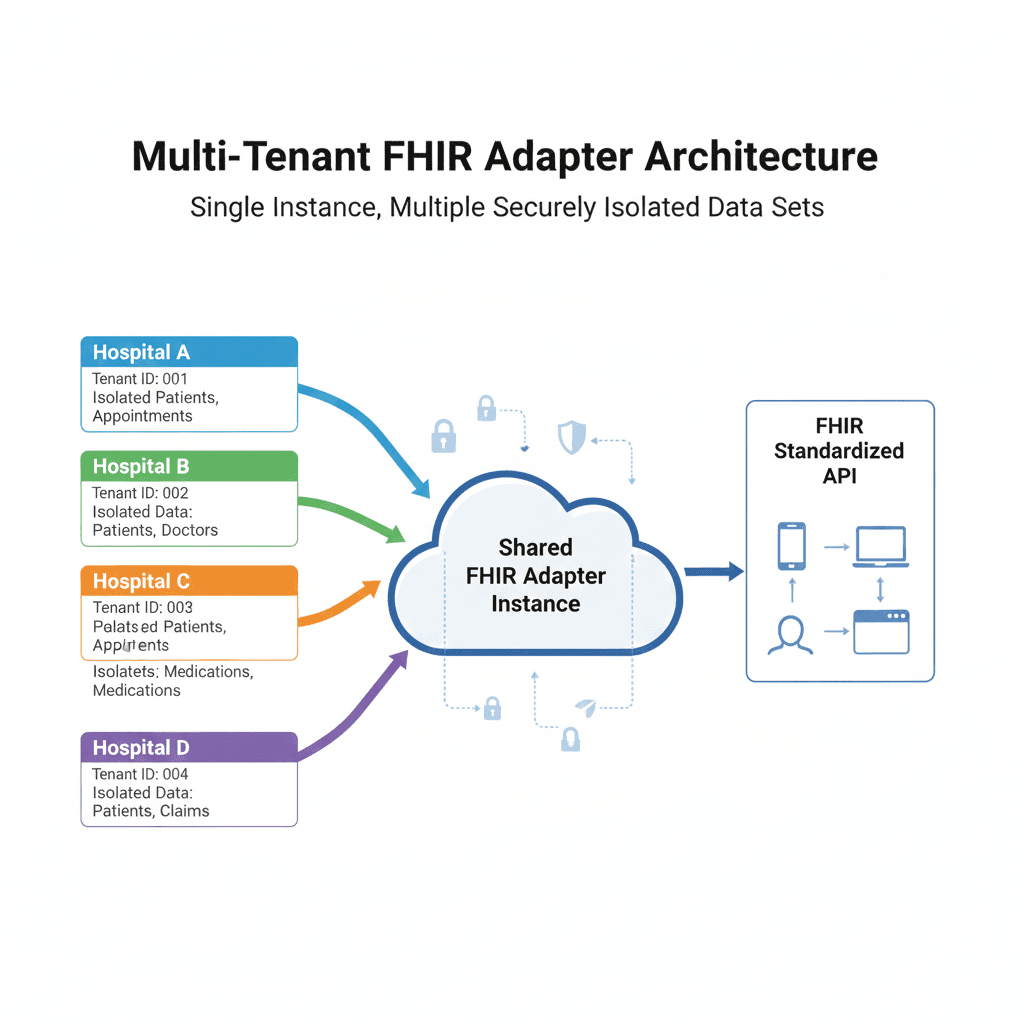

✅ Realm isolation: Perfect for multi-tenancy

✅ Open source: No vendor lock-in

Each tenant gets their own Keycloak realm. Hospital A's users can't log into Hospital B's realm. Period.
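Realm isolation only holds if the adapter also checks which realm issued a token. Here is a minimal sketch of that check, assuming Keycloak's usual issuer layout of `{server_url}/realms/{realm}` (older deployments add an `/auth` prefix to the server URL). The function names are hypothetical and not taken from the adapter's code.

```rust
/// Hypothetical helper: the `iss` value a token from `realm` is expected to carry.
/// Assumes the `{server_url}/realms/{realm}` issuer layout.
pub fn expected_issuer(server_url: &str, realm: &str) -> String {
    format!("{}/realms/{}", server_url.trim_end_matches('/'), realm)
}

/// True only when the token's `iss` claim matches the tenant's realm exactly.
/// Exact equality (not a prefix check) keeps "hospital-a-evil" from passing
/// as "hospital-a".
pub fn issuer_matches_realm(iss: &str, server_url: &str, realm: &str) -> bool {
    iss == expected_issuer(server_url, realm)
}
```

With this in place, a token minted in Hospital B's realm is rejected by Hospital A's tenant even though both realms live on the same Keycloak server.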

The JWT Flow

1. User → Keycloak: "username + password"

2. Keycloak → User: JWT token

3. User → FHIR Adapter: "GET /fhir/Patient/123" + JWT header

4. Adapter: verify the token's signature and expiry against Keycloak's public keys (JWKS, fetched once and then cached)

5. Adapter: read the claims: user=john, tenant=42, roles=[fhir-read]

6. Adapter: Process request (if authorized)

JWT Validation in Rust

I built an async middleware that validates every request:

pub async fn auth_middleware(
    State(state): State<AppState>,
    mut req: Request,
    next: Next,
) -> Result<Response, AppError> {
    // 1. Extract JWT from Authorization header
    let token = extract_bearer_token(&req)
        .ok_or_else(|| AppError::Unauthorized("Missing token"))?;

    // 2. Validate signature and expiration with Keycloak
    let claims = state.keycloak_client
        .validate_token(&token)
        .await
        .map_err(|e| {
            tracing::warn!("Token validation failed: {}", e);
            AppError::Unauthorized("Invalid token")
        })?;

    // 3. Extract critical claims
    let auth_context = AuthContext {
        user_id: claims.sub,
        client_id: claims.client_id
            .ok_or_else(|| AppError::Unauthorized("Missing client_id"))?,
        roles: claims.realm_access.roles,
        email: claims.email,
    };

    // 4. Inject into request for downstream handlers
    req.extensions_mut().insert(auth_context);

    Ok(next.run(req).await)
}

fn extract_bearer_token(req: &Request) -> Option<String> {
    req.headers()
        .get("Authorization")?
        .to_str()
        .ok()?
        .strip_prefix("Bearer ")
        .map(|s| s.to_string())
}
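One detail worth knowing about `strip_prefix("Bearer ")`: it is case-sensitive, while HTTP authentication scheme names are case-insensitive, so a client sending `bearer <token>` would be rejected. Here is a hedged sketch of a more tolerant parser. The name `parse_bearer` is hypothetical, and it works on the raw header string so it does not depend on any web framework.

```rust
/// Parse an `Authorization` header value and return the bearer token, if any.
/// The scheme is matched case-insensitively and surrounding whitespace is ignored.
pub fn parse_bearer(header_value: &str) -> Option<&str> {
    // Split "<scheme> <credentials>" at the first space.
    let (scheme, rest) = header_value.trim().split_once(' ')?;
    if !scheme.eq_ignore_ascii_case("bearer") {
        return None;
    }
    let token = rest.trim();
    if token.is_empty() {
        None
    } else {
        Some(token)
    }
}
```

Returning a borrowed `&str` avoids an allocation. The caller can still call `.to_string()` if it needs an owned token, as the middleware above does.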

JWKS Caching

Validating JWTs requires Keycloak's public keys (JWKS). Fetching them on every request would be slow.

I implemented a TTL-based JWKS cache:

pub struct KeycloakClient {
    jwks_cache: Arc<Mutex<Option<(JwkSet, Instant)>>>,
    jwks_cache_ttl: Duration,
    server_url: String,
    realm: String,
}

impl KeycloakClient {
    async fn get_jwks(&self) -> Result<JwkSet> {
        // Check cache (the lock is released before any await point)
        {
            let cache = self.jwks_cache.lock().unwrap();
            if let Some((jwks, cached_at)) = cache.as_ref() {
                if cached_at.elapsed() < self.jwks_cache_ttl {
                    return Ok(jwks.clone());
                }
            }
        }

        // Fetch from Keycloak
        let url = format!(
            "{}/realms/{}/protocol/openid-connect/certs",
            self.server_url, self.realm
        );
        let jwks: JwkSet = reqwest::get(&url)
            .await?
            .json()
            .await?;

        // Update cache
        let mut cache = self.jwks_cache.lock().unwrap();
        *cache = Some((jwks.clone(), Instant::now()));

        Ok(jwks)
    }
}

Performance: 1st request ~50ms (fetches JWKS), subsequent requests ~2ms (cached).
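To make the hit/miss behavior concrete, here is a small synchronous sketch of the same TTL idea with the fetch step passed in as a closure. `TtlCache` and `get_or_fetch` are hypothetical names, and unlike the async version above this sketch holds the lock while fetching, which is only reasonable because nothing here awaits.

```rust
use std::sync::Mutex;
use std::time::{Duration, Instant};

/// Single-slot TTL cache, mirroring the `(JwkSet, Instant)` slot used for JWKS.
pub struct TtlCache<T: Clone> {
    slot: Mutex<Option<(T, Instant)>>,
    ttl: Duration,
}

impl<T: Clone> TtlCache<T> {
    pub fn new(ttl: Duration) -> Self {
        Self { slot: Mutex::new(None), ttl }
    }

    /// Return the cached value while it is fresh; otherwise call `fetch`,
    /// store the result with the current time, and return it.
    pub fn get_or_fetch<E>(&self, fetch: impl FnOnce() -> Result<T, E>) -> Result<T, E> {
        let mut slot = self.slot.lock().unwrap();
        if let Some((value, cached_at)) = slot.as_ref() {
            if cached_at.elapsed() < self.ttl {
                return Ok(value.clone());
            }
        }
        // Cache miss or expired entry: a failed fetch leaves the old slot untouched.
        let fresh = fetch()?;
        *slot = Some((fresh.clone(), Instant::now()));
        Ok(fresh)
    }
}
```

Within the TTL, the second call never invokes its closure, which is where the gap between the first and later requests comes from.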

Authorization: Role-Based Access Control

Authentication answers "who are you?" Authorization answers "what can you do?"

I defined three roles:

fhir-read: Read resources

fhir-write: Create/update resources

fhir-admin: Admin panel access, configuration changes

impl AuthContext {
    pub fn has_role(&self, role: &str) -> bool {
        self.roles.iter().any(|r| r == role)
    }

    pub fn require_role(&self, role: &str) -> Result<(), AppError> {
        if self.has_role(role) {
            Ok(())
        } else {
            Err(AppError::Forbidden(
                format!("Role '{}' required", role)
            ))
        }
    }
}

Usage in handlers:

pub async fn patient_read(
    State(state): State<AppState>,
    auth: AuthContext, // Injected by middleware
    Path(id): Path<String>,
) -> Result<Json<Patient>, AppError> {
    // Check authorization
    auth.require_role("fhir-read")?;

    // Fetch patient (automatically scoped to auth.client_id)
    let patient = state.fetch_patient(&auth.client_id, &id).await?;

    Ok(Json(patient))
}
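Rather than hard-coding the role string in every handler, the mapping from operation to role can live in one place. The sketch below is one way to do that. The `Operation` enum and both function names are hypothetical, and treating deletes as writes is an assumption, since the role list only mentions create and update.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Operation {
    Read,
    Search,
    Create,
    Update,
    Delete,
}

/// Role required for each kind of FHIR operation.
pub fn required_role(op: Operation) -> &'static str {
    match op {
        Operation::Read | Operation::Search => "fhir-read",
        // Assumption: deletes need the same role as create/update.
        Operation::Create | Operation::Update | Operation::Delete => "fhir-write",
    }
}

/// True when the caller's roles include the one required for `op`.
pub fn is_allowed(roles: &[String], op: Operation) -> bool {
    let needed = required_role(op);
    roles.iter().any(|r| r == needed)
}
```

Note that roles are independent in this sketch: holding `fhir-write` does not imply `fhir-read`, so a client that needs both must be granted both.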

Audit Logging: The Paper Trail

Every request gets logged. Not just errors—everything.

What We Log

// Default is derived because handlers fill the remaining fields with `..Default::default()`
#[derive(Debug, Clone, Default)]
pub struct AuditLogParams {
    pub resource_type: String,            // "Patient"
    pub resource_id: Option<String>,      // "12345"
    pub operation: AuditOperation,        // Read, Create, Update, Delete, Search
    pub user_id: String,                  // From JWT
    pub client_id: String,                // Tenant ID
    pub ip_address: Option<String>,
    pub user_agent: Option<String>,
    pub request_id: String,               // UUID for correlation
    pub success: bool,
    pub error_message: Option<String>,
    pub request_body: Option<JsonValue>,  // Sanitized!
    pub response_body: Option<JsonValue>, // Sanitized!
}

#[derive(Debug, Clone, Copy, Default)]
pub enum AuditOperation {
    #[default]
    Read,
    Create,
    Update,
    Delete,
    Search,
}

The Audit Log Flow

Every handler follows this pattern:

pub async fn patient_create(
    State(state): State<AppState>,
    auth: AuthContext,
    Json(patient): Json<Patient>,
) -> Result<Response, AppError> {
    let request_id = uuid::Uuid::new_v4().to_string();

    // Prepare audit log params
    let mut audit_params = AuditLogParams {
        resource_type: "Patient".to_string(),
        resource_id: None,
        operation: AuditOperation::Create,
        user_id: auth.user_id.clone(),
        client_id: auth.client_id.clone(),
        request_id: request_id.clone(),
        success: false, // Will be updated
        error_message: None,
        // Redact sensitive fields before the body reaches the audit log
        request_body: serde_json::to_value(&patient)
            .ok()
            .map(|body| sanitize_for_audit(&body)),
        ..Default::default()
    };

    // Execute operation
    let result = async {
        let id = state.create_patient(&auth.client_id, &patient).await?;
        Ok::<_, AppError>(id)
    }.await;

    // Update audit params based on result
    match result {
        Ok(id) => {
            audit_params.success = true;
            audit_params.resource_id = Some(id.clone());

            // Log success
            if let Err(e) = state.audit_logger.log(audit_params).await {
                tracing::error!("Failed to log audit: {}", e);
            }

            Ok((StatusCode::CREATED, Json(json!({"id": id}))).into_response())
        }
        Err(e) => {
            audit_params.error_message = Some(e.to_string());

            // Log failure
            if let Err(log_err) = state.audit_logger.log(audit_params).await {
                tracing::error!("Failed to log audit: {}", log_err);
            }

            Err(e)
        }
    }
}

Critical: Even if the request fails, we log it. Especially if it fails.
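The success and failure branches repeat the same logging call, so the pattern can be factored into a wrapper that guarantees exactly one audit entry per operation. Below is a simplified, synchronous sketch of that idea. `MemoryAuditLog`, `AuditEntry`, and `with_audit` are hypothetical stand-ins for the real logger and `AuditLogParams`, not the adapter's actual types.

```rust
use std::sync::Mutex;

#[derive(Debug, Clone, PartialEq)]
pub struct AuditEntry {
    pub operation: &'static str,
    pub resource_id: Option<String>,
    pub success: bool,
    pub error_message: Option<String>,
}

/// In-memory stand-in for the real audit logger.
#[derive(Default)]
pub struct MemoryAuditLog {
    entries: Mutex<Vec<AuditEntry>>,
}

impl MemoryAuditLog {
    pub fn record(&self, entry: AuditEntry) {
        self.entries.lock().unwrap().push(entry);
    }

    pub fn entries(&self) -> Vec<AuditEntry> {
        self.entries.lock().unwrap().clone()
    }
}

/// Run `op`, then write one audit entry whether it succeeded or failed.
/// `op` returns the ID of the affected resource on success.
pub fn with_audit<E: std::fmt::Display>(
    log: &MemoryAuditLog,
    operation: &'static str,
    op: impl FnOnce() -> Result<String, E>,
) -> Result<String, E> {
    let result = op();
    let entry = match &result {
        Ok(id) => AuditEntry {
            operation,
            resource_id: Some(id.clone()),
            success: true,
            error_message: None,
        },
        Err(e) => AuditEntry {
            operation,
            resource_id: None,
            success: false,
            error_message: Some(e.to_string()),
        },
    };
    log.record(entry);
    result
}
```

Because the wrapper returns the original `Result` untouched, the handler's response logic stays the same while the audit call can no longer be forgotten in one branch.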

Sanitizing Sensitive Data

We log request/response bodies, but we sanitize PHI (Protected Health Information):

fn sanitize_for_audit(value: &JsonValue) -> JsonValue {
    match value {
        JsonValue::Object(map) => {
            let mut sanitized = serde_json::Map::new();
            for (key, val) in map {
                // A sensitive key redacts its whole value, nested content included
                let sanitized_val = if SENSITIVE_FIELDS.contains(&key.as_str()) {
                    json!("[REDACTED]")
                } else {
                    sanitize_for_audit(val)
                };
                sanitized.insert(key.clone(), sanitized_val);
            }
            JsonValue::Object(sanitized)
        }
        JsonValue::Array(arr) => {
            JsonValue::Array(arr.iter().map(sanitize_for_audit).collect())
        }
        _ => value.clone(),
    }
}

// Keys are matched exactly, so FHIR choice types such as deceased[x] and
// multipleBirth[x] must be listed under the names they carry in JSON.
const SENSITIVE_FIELDS: &[&str] = &[
    "ssn", "social_security", "password", "token",
    "birthDate",
    "deceasedBoolean", "deceasedDateTime",
    "multipleBirthBoolean", "multipleBirthInteger", // Some PHI (not exhaustive)
];
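To see what the recursion does to a nested document, here is a dependency-free sketch of the same walk. The `Value` enum is a deliberately tiny stand-in for `serde_json::Value`, and `SENSITIVE`, `sanitize`, `obj`, and `s` are hypothetical names used only for this illustration.

```rust
use std::collections::BTreeMap;

/// Minimal stand-in for `serde_json::Value`, just enough to show the recursion.
#[derive(Debug, Clone, PartialEq)]
pub enum Value {
    Str(String),
    Array(Vec<Value>),
    Object(BTreeMap<String, Value>),
}

const SENSITIVE: &[&str] = &["ssn", "birthDate"];

/// Replace the value of every sensitive key, at any depth, with "[REDACTED]".
pub fn sanitize(value: &Value) -> Value {
    match value {
        Value::Object(map) => Value::Object(
            map.iter()
                .map(|(key, val)| {
                    let cleaned = if SENSITIVE.contains(&key.as_str()) {
                        Value::Str("[REDACTED]".to_string())
                    } else {
                        sanitize(val)
                    };
                    (key.clone(), cleaned)
                })
                .collect(),
        ),
        Value::Array(items) => Value::Array(items.iter().map(sanitize).collect()),
        other => other.clone(),
    }
}

/// Convenience constructors for building example documents.
pub fn obj(pairs: Vec<(&str, Value)>) -> Value {
    Value::Object(pairs.into_iter().map(|(k, v)| (k.to_string(), v)).collect())
}

pub fn s(text: &str) -> Value {
    Value::Str(text.to_string())
}
```

Given a Patient-like object with a top-level `birthDate` and an `ssn` buried inside a `contact` array, both values come back as `[REDACTED]` while `resourceType` and other non-listed keys pass through unchanged.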

Audit Log Storage

Audit logs go to a separate database from application data:

CREATE TABLE audit_logs (
    id BIGINT AUTO_INCREMENT PRIMARY KEY,
    resource_type VARCHAR(50),
    resource_id VARCHAR(255),
    operation ENUM('read', 'create', 'update', 'delete', 'search'),
    user_id VARCHAR(255) NOT NULL,
    client_id VARCHAR(100) NOT NULL,
    ip_address VARCHAR(45),
    user_agent TEXT,
    request_id VARCHAR(36) NOT NULL,
    success BOOLEAN NOT NULL,
    error_message TEXT,
    request_body JSON,
    response_body JSON,
    created_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP,

    INDEX idx_client_id (client_id),
    INDEX idx_user_id (user_id),
    INDEX idx_request_id (request_id),
    INDEX idx_created_at (created_at)
);

Retention: We keep logs for 7 years. HIPAA's documentation retention rule sets a six-year minimum, and some state laws require longer.

Rate Limiting: Defense Against Abuse

Without rate limiting, one malicious (or buggy) client could DoS the entire system.

I implemented per-tenant rate limiting using a token bucket algorithm:

use std::collections::HashMap;
use std::sync::{Arc, Mutex};
use std::time::Instant;

pub struct RateLimiter {
    buckets: Arc<Mutex<HashMap<String, TokenBucket>>>,
}

struct TokenBucket {
    tokens: f64,
    last_refill: Instant,
    capacity: f64,
    refill_rate: f64, // tokens per second
}

impl RateLimiter {
    pub async fn check_rate_limit(
        &self,
        client_id: &str,
        tokens_needed: f64,
    ) -> Result<RateLimitInfo, AppError> {
        let mut buckets = self.buckets.lock().unwrap();

        // Each tenant starts with a full bucket on its first request
        let bucket = buckets.entry(client_id.to_string())
            .or_insert_with(|| TokenBucket {
                tokens: 100.0, // Initial capacity
                last_refill: Instant::now(),
                capacity: 100.0,
                refill_rate: 10.0, // 10 req/s sustained
            });

        // Refill tokens based on elapsed time
        let elapsed = bucket.last_refill.elapsed().as_secs_f64();
        bucket.tokens = (bucket.tokens + elapsed * bucket.refill_rate)
            .min(bucket.capacity);
        bucket.last_refill = Instant::now();

        // Check if we have enough tokens
        if bucket.tokens >= tokens_needed {
            bucket.tokens -= tokens_needed;
            Ok(RateLimitInfo {
                limit: bucket.capacity as i32,
                remaining: bucket.tokens as i32,
                // Seconds until the bucket is full again
                reset: (bucket.capacity - bucket.tokens) / bucket.refill_rate,
            })
        } else {
            Err(AppError::RateLimitExceeded)
        }
    }
}

Limits:

Burst: 100 requests

Sustained: 10 req/s

Scope: Per tenant

Headers returned:

X-RateLimit-Limit: 100

X-RateLimit-Remaining: 73

X-RateLimit-Reset: 2.7
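The header values follow directly from the bucket arithmetic. The sketch below replays it with an explicit clock (seconds passed in as a parameter) instead of `Instant`, so the numbers can be checked by hand. `Bucket`, `try_take`, and `seconds_until_full` are hypothetical names for this illustration.

```rust
/// Token bucket driven by an explicit clock, so the arithmetic is easy to follow.
pub struct Bucket {
    tokens: f64,
    capacity: f64,
    refill_rate: f64, // tokens per second
    last_refill: f64, // time of the last refill, in seconds
}

impl Bucket {
    /// A new bucket starts full.
    pub fn new(capacity: f64, refill_rate: f64, now: f64) -> Self {
        Self { tokens: capacity, capacity, refill_rate, last_refill: now }
    }

    /// Try to take `cost` tokens at time `now`; returns the tokens left on success.
    pub fn try_take(&mut self, cost: f64, now: f64) -> Option<f64> {
        // Refill for the time that has passed, capped at capacity.
        let elapsed = (now - self.last_refill).max(0.0);
        self.tokens = (self.tokens + elapsed * self.refill_rate).min(self.capacity);
        self.last_refill = now;

        if self.tokens >= cost {
            self.tokens -= cost;
            Some(self.tokens)
        } else {
            None
        }
    }

    /// Seconds until the bucket is full again (the X-RateLimit-Reset value).
    pub fn seconds_until_full(&self) -> f64 {
        (self.capacity - self.tokens) / self.refill_rate
    }
}
```

With a capacity of 100 and a refill rate of 10 per second, 27 back-to-back requests leave 73 tokens, and refilling the missing 27 takes 27 / 10 = 2.7 seconds, which matches the headers above. Once the burst of 100 is spent, a client that waits one second gets 10 tokens back.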

Security Checklist

Before going to production, I verified:

✅ All endpoints require authentication (except health check)

✅ JWT signatures validated against Keycloak JWKS

✅ Tenant isolation enforced (client_id in every query)

✅ Audit logging on all operations

✅ Sensitive data sanitized in logs

✅ Rate limiting per tenant

✅ Database URLs encrypted at rest

✅ No secrets in logs (URL sanitization)

✅ HTTPS enforced (TLS 1.3)

✅ CORS configured (whitelist only)

What I Learned

✅ What Worked:

Keycloak handles the hard stuff (MFA, SAML, etc.)

Audit logs make future security reviews much easier

Rate limiting prevents buggy clients or attacks from taking us down

❌ What Didn't:

Initial design logged full JWT tokens (oops!)

Forgot to sanitize database URLs in error messages

🔧 Improvements Made:

Added comprehensive sanitization

Implemented circuit breakers per tenant
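The database URL leak is the kind of mistake a small helper can prevent. Here is a rough sketch of redacting credentials before a URL reaches a log line or error message. The function name is hypothetical, and it assumes any special characters in the password are percent-encoded, as they should be in a well-formed URL.

```rust
/// Replace the userinfo part of a URL (`user:password@`) with `***@`.
/// URLs without a scheme or without credentials are returned unchanged.
pub fn redact_url_credentials(url: &str) -> String {
    let scheme_end = match url.find("://") {
        Some(idx) => idx,
        None => return url.to_string(),
    };
    let authority_start = scheme_end + 3;
    let rest = &url[authority_start..];

    // The authority component ends at the first '/', '?' or '#'.
    let authority_end = rest
        .find(|c: char| c == '/' || c == '?' || c == '#')
        .unwrap_or(rest.len());
    let authority = &rest[..authority_end];

    // Anything before the last '@' in the authority is userinfo.
    match authority.rfind('@') {
        Some(at) => format!(
            "{}***@{}{}",
            &url[..authority_start],
            &authority[at + 1..],
            &rest[authority_end..]
        ),
        None => url.to_string(),
    }
}
```

For example, `mysql://app:s3cret@db.internal:3306/fhir` becomes `mysql://***@db.internal:3306/fhir`. Applying this at the point where errors are formatted, instead of at each log call, makes it harder to miss a code path.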

Up Next: The Admin Panel

Security is invisible to users. The admin panel is where they actually interact with the system.

In Part 5, we'll build a Next.js admin interface that lets tenants configure mappings without writing code (or calling me at 2 AM).

Discussion: How do you handle audit logging in your systems? What's your retention policy? Let's discuss!