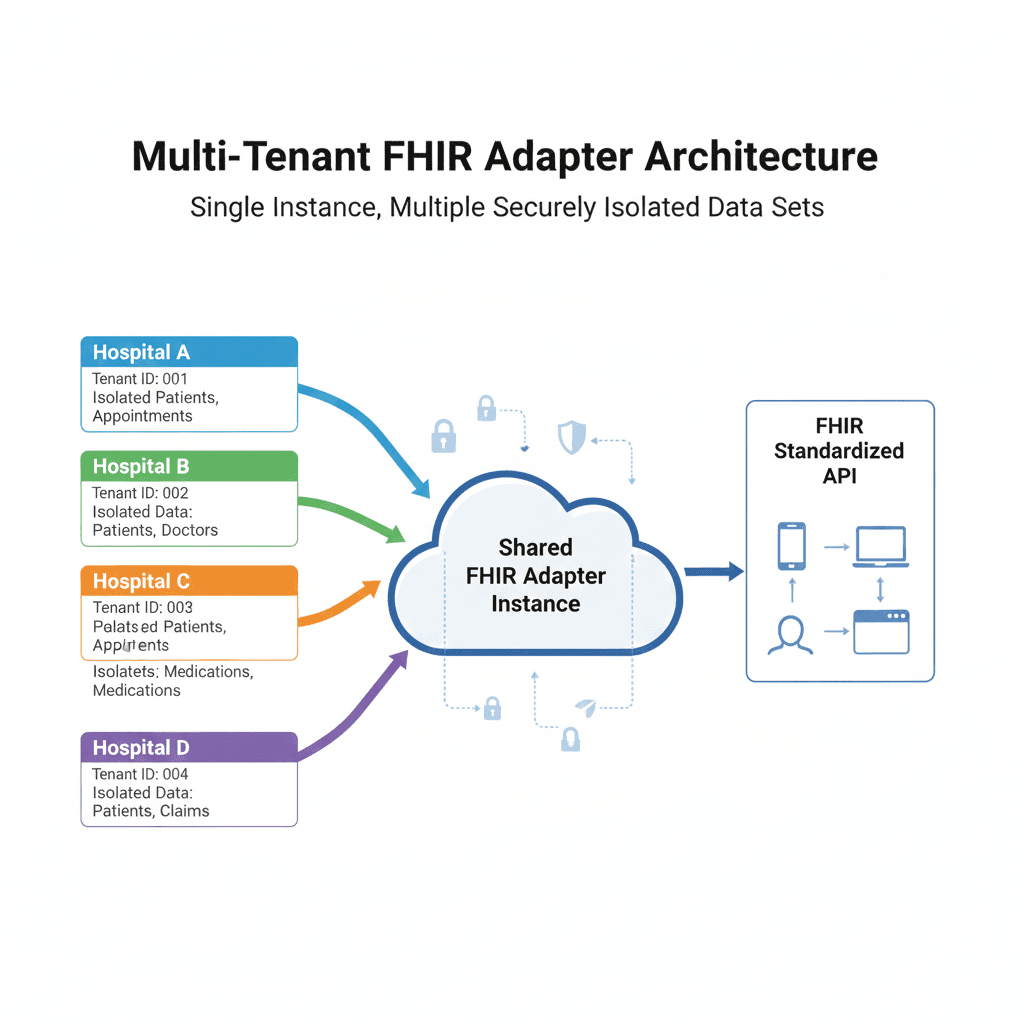

Multi-Tenant Architecture: Isolating Healthcare Data at Scale

The Multi-Tenancy Challenge

Imagine you're running a SaaS application. Now imagine that application handles the most sensitive data possible: medical records. Now imagine that a single bug could expose Patient A's cancer diagnosis to Hospital B.

That's the stakes we're playing with in a multi-tenant healthcare system.

Most SaaS apps solve multi-tenancy at the application layer—same database, different tenant_id columns. That works for Slack or Notion. It doesn't work for healthcare.

Here's why:

Regulatory compliance: HIPAA requires strong data isolation

Different schemas: Hospital A stores patient names as

patient_name, Hospital B usesfull_nameLegacy systems: Each tenant runs their own ancient MySQL database on-premise

Zero trust: One tenant's data must be physically impossible to access from another tenant's queries

The Architecture: Database-Level Isolation

Instead of logical isolation (same DB, different rows), I went with physical isolation—each tenant gets their own database connection to their own database.

// Each tenant has their own encrypted database URL

pub struct TenantDatabaseConfig {

pub id: i32,

pub client_id: String,

pub database_url: String, // Encrypted

pub database_type: DatabaseType,

}

The Connection Pool Dance

Here's the tricky part: you can't create a new database connection for every request. Connections are expensive (100ms+ handshake). But you also can't keep 1000 connection pools open if you have 1000 tenants.

I built a TenantPoolManager that:

Lazily creates connection pools (only when needed)

Caches them in memory for fast access

Evicts idle pools after 30 minutes of inactivity

Validates connections before returning them

pub struct TenantPoolManager {

pools: Arc<Mutex<HashMap<String, DatabasePool>>>,

encryption_key: Vec<u8>,

}

impl TenantPoolManager {

pub async fn get_pool(&self, client_id: &str) -> Result<DatabasePool> {

// Check cache first

{

let pools = self.pools.lock().unwrap();

if let Some(pool) = pools.get(client_id) {

return Ok(pool.clone());

}

}

// Not cached - fetch config, decrypt URL, create pool

let config = self.fetch_tenant_config(client_id).await?;

let decrypted_url = self.decrypt_database_url(&config.database_url)?;

let pool = self.create_pool(&decrypted_url).await?;

// Cache it

let mut pools = self.pools.lock().unwrap();

pools.insert(client_id.to_string(), pool.clone());

Ok(pool)

}

}

Encryption

Database URLs contain credentials. Storing them in plaintext would be... unwise.

Every tenant's database URL is encrypted using AES-256-GCM before hitting the database:

fn encrypt_database_url(&self, url: &str) -> Result<String> {

let cipher = Aes256Gcm::new(Key::<Aes256Gcm>::from_slice(&self.encryption_key));

let nonce = Aes256Gcm::generate_nonce(&mut OsRng);

let ciphertext = cipher.encrypt(&nonce, url.as_bytes())

.map_err(|e| AdapterError::Encryption(e.to_string()))?;

// Encode as base64 for storage

let mut combined = nonce.to_vec();

combined.extend_from_slice(&ciphertext);

Ok(base64::encode(&combined))

}

The encryption key lives in environment variables, never in the database.

Configuration Caching: Speed vs Consistency

Each tenant has a configuration:

Which FHIR resources they support

How to map FHIR paths to database columns

Custom transformations (e.g., "1"/"0" → true/false)

Nested array handling strategies

Loading this from MySQL on every request would be slow (5-10ms per query). But caching it forever means configuration changes don't take effect.

I built a ConfigResolver with a TTL-based cache:

pub struct ConfigResolver {

cache: Arc<Mutex<HashMap<String, CachedConfig>>>,

cache_ttl: Duration,

}

struct CachedConfig {

config: TenantConfig,

loaded_at: Instant,

}

impl ConfigResolver {

pub async fn get_config(&self, client_id: &str) -> Result<TenantConfig> {

// Check cache

{

let cache = self.cache.lock().unwrap();

if let Some(cached) = cache.get(client_id) {

if cached.loaded_at.elapsed() < self.cache_ttl {

return Ok(cached.config.clone());

}

}

}

// Cache miss or expired - reload

let config = self.load_from_database(client_id).await?;

let mut cache = self.cache.lock().unwrap();

cache.insert(client_id.to_string(), CachedConfig {

config: config.clone(),

loaded_at: Instant::now(),

});

Ok(config)

}

// Admin panel calls this after configuration changes

pub async fn invalidate(&self, client_id: &str) {

let mut cache = self.cache.lock().unwrap();

cache.remove(client_id);

}

}

Authentication: Keycloak Integration

Multi-tenancy isn't just about data—it's about who can access that data.

I integrated Keycloak for enterprise SSO:

Client ID embedded in JWT: Every request includes

client_idclaimRole-based access:

fhir-read,fhir-write,fhir-admin

The authentication flow:

User logs in via Keycloak

Every request to the adapter includes this JWT

Middleware validates JWT and extracts

client_idAll database queries are scoped to that

client_id

Keycloak issues JWT with claims:

{

"sub": "user-123",

"client_id": "tenant-42",

"realm_access": {

"roles": ["fhir-read", "fhir-write"]

}

}

No way to query across tenants. The client_id is the security boundary.

// Middleware that extracts and validates tenant context

pub async fn auth_middleware(

State(state): State<AppState>,

req: Request,

next: Next,

) -> Result<Response, AppError> {

let token = extract_bearer_token(&req)?;

// Validate JWT signature and expiration

let claims = state.keycloak.validate_token(&token).await?;

// Extract client_id - this determines data isolation

let client_id = claims.client_id

.ok_or_else(|| AppError::Unauthorized("Missing client_id"))?;

// Inject into request extensions for downstream handlers

req.extensions_mut().insert(AuthContext {

user_id: claims.sub,

client_id,

roles: claims.realm_access.roles,

});

Ok(next.run(req).await)

}

Lessons Learned

✅ What Worked

Database-level isolation: No worrying about leaked

WHEREclausesLazy pool creation: Most tenants are idle most of the time

TTL-based config cache: 5-minute TTL is a sweet spot

❌ What Didn't

Initial design had no pool eviction: Memory grew unbounded

Encrypted URLs in logs: Accidentally logged encrypted URLs (look like gibberish, but still bad)

No circuit breakers: One tenant's broken DB took down the whole adapter

🔧 Improvements Made

Added pool eviction after 30 min idle

Sanitized all logging (URLs, credentials, PHI)

Per-tenant circuit breakers (now one tenant can fail without affecting others)

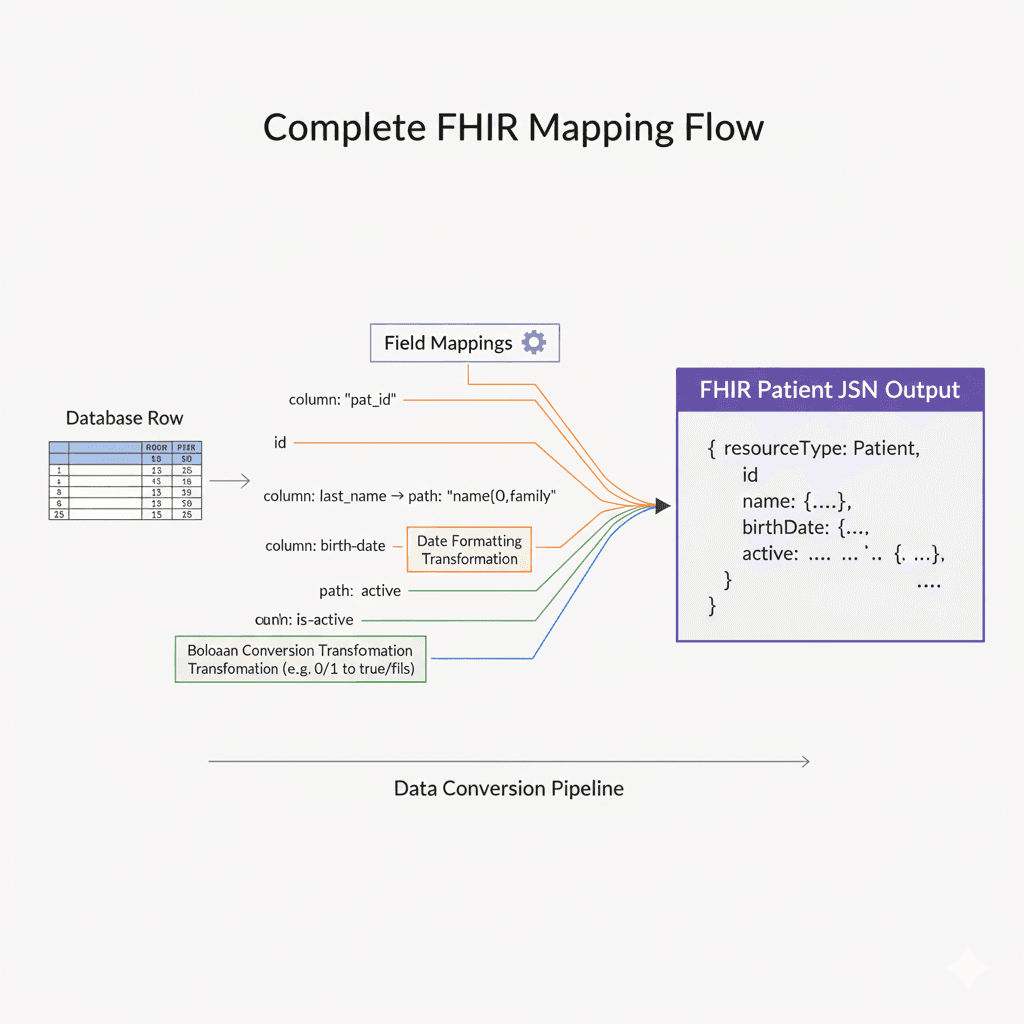

Up Next: The Mapping Engine

Physical isolation solves security. But how do we handle the fact that Hospital A stores patient names as patient_name while Hospital B uses nombre_paciente?

That's where the dynamic mapping engine comes in.

Part 3 will dive deep into how the adapter translates arbitrary database schemas into standard FHIR resources at runtime—without code generation.

Discussion: How do you handle multi-tenancy in your systems? Physical vs logical isolation? Let me know your thoughts.